So for a while at the office we’ve had a VERY annoying problem with some of the higher-end laptops we use for STEM classes. It’s a problem that hasn’t really been reported by the end users, only by us in the Tech Office directly. We have some Dell Precision M2800s with rather decent specs (which I won’t delve into much right now, except to say they do have marvelous 256GB solid-state drives installed in them).

When we first got them, we marveled at the speed of the devices. They were blazing fast. Everyone at the office wanted one. We were puzzled by some problems deploying a few packages, but largely chalked them up to the imaging servers (which were getting a little on the old side).

A few weeks after that we had to re-image the first one and the problems became evident. Jobs that took ~5 minutes to run on a different model laptop were taking 30 minutes to run on these (beefier) laptops. We were at a loss.

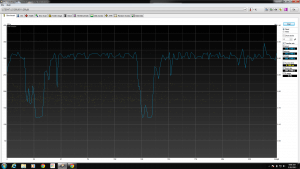

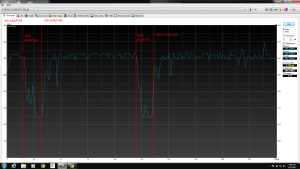

That being said, we largely pushed the problem aside (because there are bigger fish to fry on any given day!). Today we had some time to delve into it, and we figured it out real quick, all things considered. I’ll let you look at the picture, which is probably proof enough.

Figured it out yet? Hint: power! Still nothing? Ok.

That’s right.

Using any AC adapter rated below 130W on the M2800 will result in the machine yelling at you during power-up, and for good reason: the adapter isn’t powerful enough.

If you use a 65W or a 90W AC adapter, both the drive and the CPU clock themselves down so that there is enough power left to charge the battery.

Frustrating, to say the least. “OMG, DUH” as my coworker politely put it.

The moral of the story: use an AC adapter with the same wattage as the one that came with your laptop, or you might experience otherwise unexplainable performance drops.
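If you keep any kind of inventory of which adapter is plugged into which machine, a check like this is easy to automate. The snippet below is only a minimal sketch: the 130W figure comes from the M2800 story above, while the function name, the asset tags, and the sample data are all made up for illustration.

```python
# Minimum adapter rating for the M2800, per the story above.
REQUIRED_WATTS = 130


def undersized_adapters(inventory, required_watts=REQUIRED_WATTS):
    """Return the asset tags whose adapter is rated below required_watts.

    `inventory` maps a (hypothetical) asset tag to the wattage of the
    AC adapter currently used with that laptop.
    """
    return sorted(
        tag for tag, watts in inventory.items() if watts < required_watts
    )


if __name__ == "__main__":
    # Made-up example data, not real measurements.
    sample = {"STEM-01": 130, "STEM-02": 90, "STEM-03": 65}
    print(undersized_adapters(sample))  # prints ['STEM-02', 'STEM-03']
```

Anything it flags is a candidate for the “why is this laptop so slow?” treatment before you start blaming the imaging servers.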

God damn, we are the dumbest smart people I know.